What I’m trying to do:

Download my old data for backup and to clear more space

What I’ve tried and what’s not working:

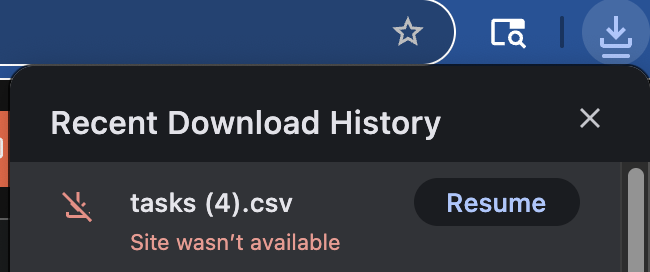

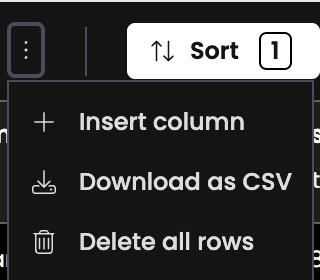

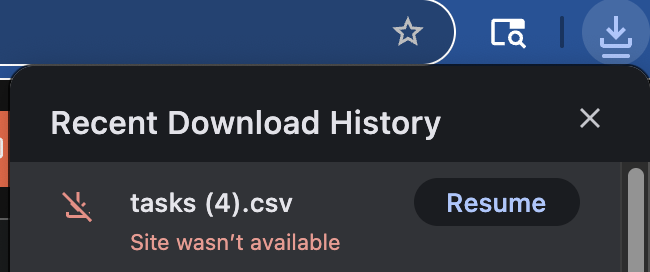

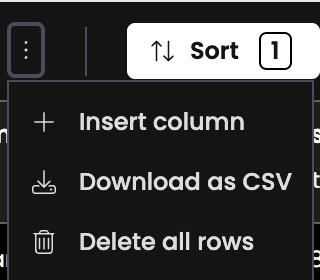

Whenever I try to download a large table using client code or the Data Tables UI, I get this error:

Code:

    def download_tasks(self):
        # to_csv() returns a Media object holding the whole table
        rows = app_tables.tasks.search().to_csv()
        anvil.media.download(rows)

UI:

How can I download my large data table (~900k rows)?

Follow-up:

I tried another approach to work around the internal error when downloading large tables (~900k rows). Specifically, I used the Uplink to construct a new CSV file by iterating through rows and adding them manually.

This did let me generate a CSV, but I ran into two new problems:

- Some data went missing during the export ({"type": "dict", "name": null, "size": null}).

- For linked columns (for example, rows that reference another table), I got {"type": "Row", "name": null, "size": null} instead of the expected values (e.g. #ROW[875660,1714386368]).

So my question is:

What’s the best practice for exporting very large Data Tables (with hundreds of thousands of rows) without hitting errors or losing linked data references?

Getting errors when downloading very large files can be expected, but losing data when using Uplink is not.

Try getting one row and inspecting its contents with a debugger. You can either put a breakpoint in a server module after reading a row, or run Uplink in PyCharm or VS Code and check the debugging panel.

Each row object should include a reference to the linked row. You can then use that to retrieve the id of the other row, for example:

row["other_table"].get_id()

That said, since you’re already building a custom download script, it may be more useful to store the relevant data from the linked table directly, instead of just saving an id that isn’t very meaningful on its own.
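As a rough illustration of the points above, here is a minimal sketch of a per-cell serializer for an Uplink export script. The names `serialize_value` and `write_rows` are made up for this example, and linked rows are detected by duck typing on `get_id()` rather than by importing an Anvil class. Media columns are not handled. Treat it as a starting point, not a tested export tool.

```python
import csv
import json
from datetime import date, datetime


def serialize_value(value):
    """Turn one Data Table cell into something csv.writer can store.

    Assumption: anything with a get_id() method is a linked row.
    """
    if value is None:
        return ""
    if hasattr(value, "get_id"):
        # Single linked row: keep its id so the reference is not lost
        return value.get_id()
    if isinstance(value, (list, tuple)) and value and all(
        hasattr(v, "get_id") for v in value
    ):
        # Multi-row link column: store the ids as a JSON list
        return json.dumps([v.get_id() for v in value])
    if isinstance(value, (dict, list, tuple)):
        # Simple Object columns: store as JSON instead of a placeholder
        return json.dumps(value, default=str)
    if isinstance(value, (datetime, date)):
        return value.isoformat()
    return value


def write_rows(rows, columns, out):
    """Write rows to the open text file `out`, one at a time.

    Returns the number of rows written.
    """
    writer = csv.writer(out)
    writer.writerow(["row_id"] + list(columns))
    count = 0
    for row in rows:
        cells = [serialize_value(row[c]) for c in columns]
        writer.writerow([row.get_id()] + cells)
        count += 1
    return count
```

From an Uplink script you would call `anvil.server.connect(...)` with your Uplink key, open a local file with `newline=""`, and pass `app_tables.tasks.search()` as `rows`. The column names can be typed by hand or, I believe, read from `app_tables.tasks.list_columns()`. Because the rows are written as they are iterated, the full table never has to be turned into a single Media object.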


See the thread "Backup to SQLite via Uplink" for some local Python code that downloads a database to a local SQLite database. Look later in that thread for versions that work with the current anvil.yaml format.

Restoring that data is another matter entirely, especially fixing up linked references.
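To give an idea of what the SQLite route looks like, here is a minimal sketch. It is not the code from that thread. The function names and the `{"$row": id}` marker for linked rows are conventions invented for this example, every column is stored as JSON text, and linked rows are again detected by their `get_id()` method.

```python
import json
import sqlite3


def encode(value):
    """Encode one cell as JSON text; linked rows become {"$row": id}."""
    if hasattr(value, "get_id"):
        return json.dumps({"$row": value.get_id()})
    if isinstance(value, (list, tuple)) and value and all(
        hasattr(v, "get_id") for v in value
    ):
        return json.dumps([{"$row": v.get_id()} for v in value])
    # default=str covers dates and other non-JSON types
    return json.dumps(value, default=str)


def save_rows(conn, table, rows, columns):
    """Copy rows into a local SQLite table keyed by the Anvil row id."""
    defs = ["row_id TEXT PRIMARY KEY"] + [f'"{c}" TEXT' for c in columns]
    conn.execute(f'CREATE TABLE IF NOT EXISTS "{table}" ({", ".join(defs)})')
    marks = ", ".join("?" for _ in defs)
    with conn:  # commit once all rows are inserted
        conn.executemany(
            f'INSERT OR REPLACE INTO "{table}" VALUES ({marks})',
            ([row.get_id()] + [encode(row[c]) for c in columns] for row in rows),
        )
```

Keeping the original row id next to every `{"$row": ...}` marker is what would let a later restore script map old ids to newly created rows, which is the hard part mentioned above.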


Thank you, this is very helpful.