Is this specific to our server or is this something global?

I'm having some problems as well: loading is very, very slow.

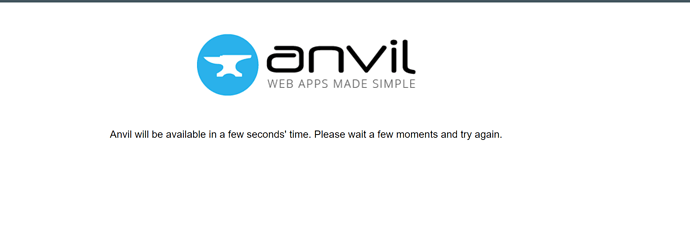

We haven't seen speed issues, but we've had a lot of confused customer calls about this logo:

It's getting to the point where confused customer calls are turning into angry customer calls.

Yes, I have been experiencing the same thing. Luckily, my tools are internal to the company. Unfortunately, my upper management is NOT happy with the stability of my applications.

Same here. I've had intermittent slowdowns and times when I couldn't access apps.

Same here. Constant timeouts and server errors today.

I can’t say I’ve had a single problem lately, which is odd, since when everyone else has problems I usually do as well.

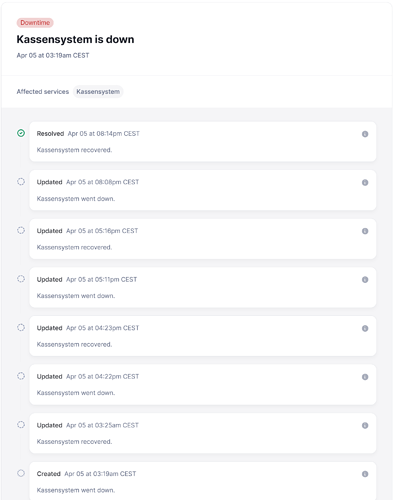

Only one of my uplink logs caught the outages:

(The integers are Unix timestamps recorded immediately after the script restarts.)

'Connection to Anvil Uplink server lost'

1680616997

'Connection to Anvil Uplink server lost'

1680699796

'Connection to Anvil Uplink server lost'

[Errno 11001] getaddrinfo failed

1680700044

'Connection to Anvil Uplink server lost'

1680782844

'Connection to Anvil Uplink server lost'

Invalid response status: b'503' b'Service Unavailable'

1680783037

1680783038

1680783038

1680783039

1680783039

'Connection to Anvil Uplink server lost'

1680790443
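To make the log above easier to read, here is a small Python sketch (not part of the original post) that converts the restart timestamps to UTC and shows how many seconds passed since the previous restart. The `describe` helper is just an illustrative name.

```python
from datetime import datetime, timezone

# Restart timestamps copied from the uplink log above (seconds since the Unix epoch)
restarts = [
    1680616997, 1680699796, 1680700044, 1680782844,
    1680783037, 1680783038, 1680783038, 1680783039, 1680783039,
    1680790443,
]


def describe(timestamps):
    """Return (UTC time string, seconds since previous restart) pairs."""
    rows = []
    previous = None
    for ts in timestamps:
        when = datetime.fromtimestamp(ts, tz=timezone.utc).strftime("%Y-%m-%d %H:%M:%S UTC")
        gap = None if previous is None else ts - previous
        rows.append((when, gap))
        previous = ts
    return rows


for when, gap in describe(restarts):
    print(when, "" if gap is None else f"(+{gap} s)")
```

Run as-is, it should report the first restart at 2023-04-04 14:03:17 UTC and show the five restarts after the 503 error landing within about two seconds of each other, which suggests the script retried immediately rather than backing off while the server was unavailable.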

Seeing these little complaints and signs of problems pop up regularly is emotionally painful. I absolutely love using Anvil, but in the end reliability is more important to me than productivity (even though I treasure productivity, if the system can't be trusted, then productivity goes out the window). Luckily, for my needs, the open source version currently provides a solution for in-house needs, but in the past I've experienced an entire ecosystem I relied upon disappear, and I'm very guarded about ever getting myself into that position again…

There was a brief discussion about an ‘LTS’ version of Anvil. I wonder whether having a system like that in place for production apps is a possibility.

In the end, not everything is Anvil-related, either; AWS just has bad days sometimes.

At one of my previous jobs, we had heavily integrated Zendesk email into our customer service workflows, and the European Amazon EC2 cluster/pod that ran our Zendesk instance would sometimes go down for hours or days with no resolution in sight from either Zendesk or Amazon.

Please don't get me wrong, Anvil seems to provide top-notch technical service and the company appears to be fantastically talented. I just see a potential need to look into how to provide more rock-solid support for existing apps that are fully working. Some sort of ‘LTS’ system seems to be a useful proposition that could put a lot of users at ease…

Dear Meredydd,

Please can we have some information about what's going on?

I guess it is connected to the release of Accelerated Tables on April 14th.

Cheers Aaron

My biggest annoyance is that Anvil has yet to make any statements or declare an ETR.

I'm assuming they're well into their investigation right now. Let's wait it out.

My editor is down as well.

I haven’t had any issues myself (mostly just editing an app on-and-off over the past 24 hours). But I want to second the concern about that logo popping up. Such a message may be disconcerting to users of apps-in-production who have no idea what “Anvil” is.

Hi folks,

Sorry for being later to this thread than we normally aim for. Suffice it to say that if you’re seeing a 503 error (aka the page with the Anvil logo), something big has fallen over and all the alarms are going off on our end.

This incident should now be resolved, and normal service resumed. If you see anything else, please let us know.

A little more behind-the-curtain detail: We’ve been experiencing escalating load on the main data store that backs the Anvil hosted platform - that includes both platform data (user accounts, apps, etc) and Data Tables data - and it’s caused a bit of platform instability. (Unfortunately, @nickantonaccio, this is a “scaling a growing service” problem rather than a “deployed bad code” problem. And, as I’ll talk about in a minute, the only way out involves shipping more new code, which makes “LTS” not really an option.)

We’re responding by improving our data storage in a few ways, including:

- Shipping performance improvements to improve throughput and remove bottlenecks (for example, we have fully rebuilt how Media is stored in Data Tables, addressing the underlying cause of this outage last month), and several less braggable-about but crucial efficiency improvements;

- Upgrading our version of Postgres to a version with better scalability characteristics for the access patterns we use in Data Tables; and

- More fully isolating shared Data Tables storage from the platform bookkeeping data, so that saturating one does not produce a slowdown in the other.

Doing this on a live service that’s processing many thousands of queries per second feels a little bit like open-heart surgery. We can’t just shut down for the weekend and dump/restore or migrate all of our data. What’s worse – and the proximate cause of recent outages – is that some of these changes require migrations such as “rewriting every Media object stored in Data Tables”. That’s terabytes of data, and this produces a lot of load on the data store (the same data store that’s causing problems by being overloaded). If we slip up (as we did a couple of times today, trying to migrate too much, too fast), it can all go pear-shaped very fast. In the extreme case, enough of our platform can be wedged trying to get a word in with the data store that our load-balancer decides that all the servers are down, there’s nowhere to send your request, and hands out 503 errors for a few moments.
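As a rough illustration of why "migrating too much, too fast" matters, here is a hypothetical Python sketch of a throttled, batched migration: it rewrites items one batch at a time and pauses whenever a load signal from the data store is above a ceiling. The `rewrite` and `current_load` callables, the batch size, and the 0.7 load threshold are all assumptions for illustration; this is not a description of how Anvil actually runs its migrations.

```python
import time
from typing import Callable, List


def migrate_in_batches(
    item_ids: List[int],
    rewrite: Callable[[List[int]], None],   # hypothetical: rewrites one batch of stored objects
    current_load: Callable[[], float],      # hypothetical: data-store load, 0.0 (idle) to 1.0 (saturated)
    batch_size: int = 100,
    max_load: float = 0.7,
    pause_s: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Rewrite items a batch at a time, pausing whenever the data store looks busy.

    Returns the number of pauses taken (useful for logging and tests).
    """
    pauses = 0
    i = 0
    while i < len(item_ids):
        # Back off while the shared data store is above the load ceiling,
        # so the migration does not compete with live traffic at full tilt.
        while current_load() > max_load:
            sleep(pause_s)
            pauses += 1
        rewrite(item_ids[i:i + batch_size])
        i += batch_size
    return pauses
```

The design choice here is that the migration yields to live traffic rather than the other way round: a slower migration is a much smaller problem than a load balancer deciding every server is down.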

A note to @mark.breuss: Normally, your Dedicated plan insulates you from issues like this. Unfortunately, the “blast radius” of this data store includes parts of the system that your requests pass through on the way to your dedicated server, so you’ve been affected this time. (We’re working on making this more robust and isolated – see above. If you’re on a Dedicated plan, problems in shared Data Tables should not become your problems!)

Finally, an apology to everyone who’s been receiving an earful from their customers, senior management, or anyone else about this. I know that feeling really sucks, especially when the cause is not within your control. This incident should now be resolved, some of the performance improvements I talked about are already kicking in, and we’re racing towards the next Big Upgrade as quickly as we can, which should buy us significant breathing space.

Thank you for the explanation, Meredydd. I hope you're able to get some sleep sometime soon. When you're able to breathe again: is it possible to distribute Anvil geographically (e.g., anvilworks.uk, anvilworks.us, anvilworks.in, etc.), so that traffic coming from various parts of the globe is initially handled by entirely different instances of what is now anvil.works (load balancers and all)?

Or perhaps anvillts1.com, anvillts2.com, etc., where the back-end system follows a less frequent LTS upgrade schedule. When you've reached the maximum number of users on each domain, each new group of clients who choose ‘LTS’ accounts would get set up at anvillts3.com, anvillts4.com, etc. I don't personally care what URL I have to log into to get to the editor, or whether my subdomains work at myurl.anvil.app vs. myurl.anvillts1.app… just my 2 cents…
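The domain-allocation idea above can be sketched in a few lines of Python. This is purely hypothetical: the `anvillts{n}.com` naming follows the post, while the `LtsAllocator` class and its per-domain capacity limit are made up for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class LtsAllocator:
    """Assign each new LTS customer to the newest domain until it is full.

    Hypothetical sketch: the capacity limit is an illustrative parameter.
    """
    max_users_per_domain: int
    members: Dict[str, List[str]] = field(default_factory=dict)

    def _domain(self, n: int) -> str:
        return f"anvillts{n}.com"

    def assign(self, customer: str) -> str:
        n = len(self.members)  # number of domains opened so far
        # Open a new domain if none exists yet or the newest one is full.
        if n == 0 or len(self.members[self._domain(n)]) >= self.max_users_per_domain:
            n += 1
            self.members[self._domain(n)] = []
        domain = self._domain(n)
        self.members[domain].append(customer)
        return domain
```

For example, with a capacity of two users per domain, five customers would land on anvillts1.com, anvillts1.com, anvillts2.com, anvillts2.com and anvillts3.com. Existing domains are never reshuffled, so each group of customers stays on the same upgrade schedule once assigned.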

Hi @meredydd,

thanks for the thorough update on the issue.

I do get scaling issues, and I appreciate the complexity you guys handle.

I don't need to reiterate your own words, but isolation from outages like this is our top priority.

Also, the part of me that is not responsible for building stable software is rooting for you guys, since additional load = additional customers.